Balancing Transparency and Autonomy to Build Trust in Autonomous Vehicles

Executive Summary

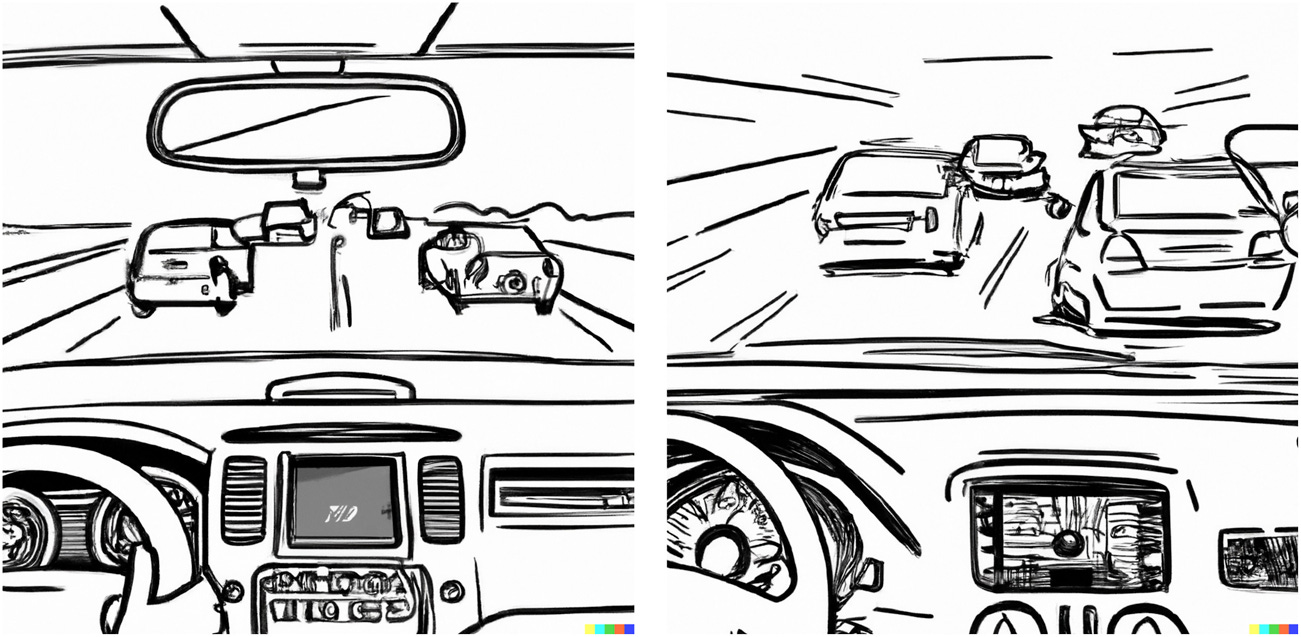

A study published in Frontiers in Robotics and AI in September 2025 by researchers at Uppsala University explored how transparency and autonomy affect human trust in autonomous vehicles. As self-driving technologies evolve from passive navigation tools into interactive agents capable of complex decisions, building user trust has become a defining challenge. The research, titled "Information vs Situation: Balancing Transparency and Autonomy for Trustworthy Autonomous Vehicles," examined how different levels of information shared by vehicles influence drivers’ sense of comfort, control, and confidence.

Using participatory design and large-scale online experiments involving 206 licensed drivers, the authors tested two key variables: the amount of information communicated by the vehicle (high vs. low transparency) and the degree of autonomy users were willing to grant in different driving environments. Results showed that vehicles that clearly explained their actions and reasoning generated higher trust and comfort, especially during uncertain or high-risk scenarios. However, the relationship between autonomy and trust proved complex: participants accepted high autonomy only when they felt adequately informed.

The study concludes that successful deployment of autonomous systems depends not only on engineering reliability but also on psychological design. By aligning transparency, context, and control, developers can create vehicles perceived as safe collaborators rather than opaque machines.